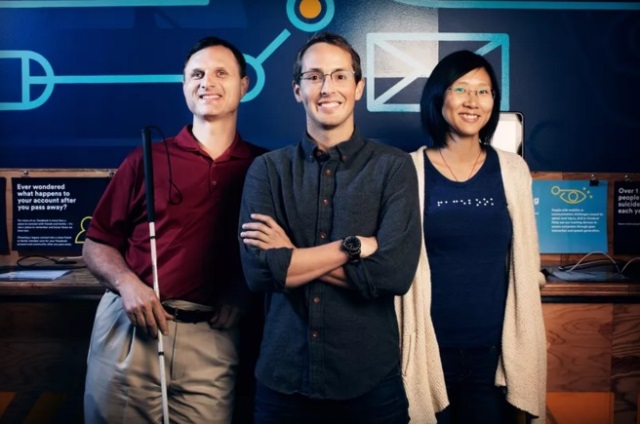

Facebook accessibility team members (from left to right) Matt King, Jeff Wieland, and Shaomei Wu

Facebook has begun using artificial intelligence to automatically describe the content of photos to blind and visually impaired users.

Starting today, a tool called Automatic Alternative Text will describe photos to users through text-to-speech engines. Facebook's 5-year-old accessibility team, led by Jeff Wieland, built the tool on an artificial intelligence engine that recognizes objects in photos by using algorithms to make predictions.

Automatic ALT Text identifies the contents of Facebook photos and uses the iPhone’s VoiceOver feature to read the description aloud to users. While still in its early stages, it can already recognize objects in categories including transportation, nature, sports, and food. It can also identify selfies and pick out particular characteristics, including smiles, beards, and eyeglasses.

Matt King, a blind Facebook employee, demonstrated a prototype and acknowledged that the service was far from perfect but was a notable improvement over the status quo. Automatic ALT Text is now available on iOS and will later roll out to Android.